Intelligent Design

Why Can’t We Explain the Brain?

Neuroscience is an exciting, and frustrating, business for practitioners of Artificial Intelligence (AI), and of other fields like cognitive science that are dedicated to reverse engineering the human mind by studying the brain. Leibniz anticipated much of the modern debate centuries ago, when he remarked that if we could shrink ourselves to microscopic size, and “walk around” inside the brain, we would never discover a hint of our conscious experiences. We can’t see consciousness, even if we look at the brain.

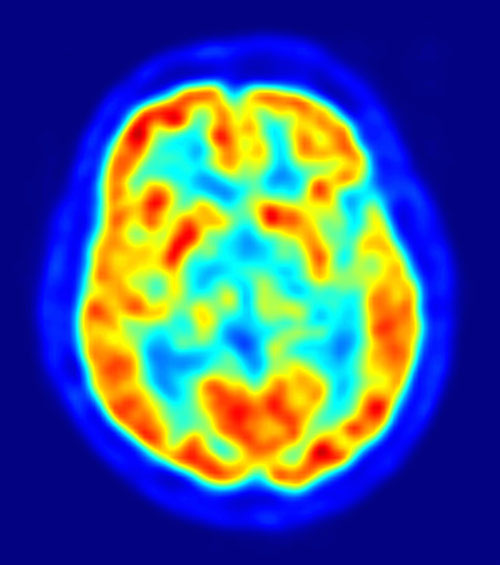

The problem of consciousness (or POC), as it is known to philosophers, is a thorny problem that unfortunately has not yielded its secrets, even as techniques for studying the brain in action have proliferated in the sciences. Magnetic Resonance Imaging (MRI), functional MRIs (fMRI), and other technologies give us detailed maps of brain activity that have proven enormously helpful in diagnosing and treating a range of brain-related maladies, from addiction to head trauma to psychological disorders. Yet for all the sophistication of modern science, Leibniz’s remarks remain prescient. If the brain is the seat of consciousness, why can’t we explain it in terms of the brain?

When I was a PhD student at Arizona in the late 1990s, many of the philosophic and scientific rock stars would gather at the interdisciplinary Center for Consciousness Studies to discuss the latest theories on consciousness. While DNA co-discoverer Francis Crick declared in The Astonishing Hypothesis that “a person’s mental activities are entirely due to the behavior of nerve cells, glial cells, and the atoms, ions, and molecules that make them up and influence them,” scientists like Christof Koch of Caltech pursued specific research on “the neural correlates of consciousness” in the visual system, and Stuart Hameroff, along with physicist Roger Penrose, searched for the roots of consciousness at still more fundamental levels, in the quantum effects of the so-called microtubules in our brains.

Philosophers dutifully provided the conceptual background, from Paul and Patricia Churchland’s philosophic defense of Crick’s hypothesis in eliminativism — the view that there is no problem of consciousness because “consciousness” isn’t a real scientific object of study (it’s like an illusion we create for ourselves, with no physical reality) — to David Chalmers’s defense of property dualism, to the “mysterians” like Colin McGinn, who suggest that consciousness is beyond understanding. We are “cognitively closed” to certain explanations, says McGinn, like a dog trying to understand Newtonian mechanics.

Yet with all the great minds gathered together, solutions to the problem of consciousness were in short supply. In fact the issues, to me anyway, seemed to become larger, thornier, and more puzzling than ever. I left Arizona and returned to the University of Texas at Austin in 1999, and I didn’t think much more about consciousness — with all those smart people drawing blanks, contradicting each other, and arguing over basics like how even to frame the debate, who needed me? Ten years later I checked in with the consciousness debate to discover, as I suspected, that the issues on the table were largely unchanged. To paraphrase philosopher Jerry Fodor: the beauty of philosophical problems is that you can leave them sit for decades, and return without missing a step.

Intelligence, Not Consciousness

My interest anyway was in human intelligence, not consciousness. Unlocking the mystery of how we think was supposed to be a no-brainer (so to speak). Ever since Turing gave us a model of computation in the 1930s, researchers in AI have been proclaiming that non-biological intelligence is imminent. Fifty years ago Nobel laureate and AI expert Herbert Simon won the prestigious A.M. Turing Award as well as the National Medal of Science. That didn’t stop him from declaring in 1957 that “there are now in the world machines that think, that learn and that create,” or from prognosticating in 1965: “[By 1985], machines will be capable of doing any work man can do.” In 1967, AI luminary Marvin Minsky of MIT predicted that “within a generation, the problem of creating ‘artificial intelligence’ will be substantially solved.”

Of course, none of this happened. And while such talk of AI is back in vogue, something is different today. The once bold, audacious claims made by Minsky and others that we would directly program a computer to think like a person are now in short supply. AI rhetoric is back, but it’s now shrouded in mystery, as if to armor its enthusiasts against further embarrassing contradictions. Today, we have “evolving intelligence” in a “global Web,” or the “intelligenization” of the world (the latter an unfortunate neologism from Wired co-founder Kevin Kelly, who also gave us the biological-sounding “Technium” label for modern technology). All this grandiose-sounding obscurity is perhaps an understandable psychological reaction to so much past failure.

The neuroscience approach to AI is something old made new again as well. D.O. Hebb first proposed that neurons could “learn” if the strength of a connection between them increased whenever they were excited together. By 1962 Frank Rosenblatt had made a research program of this, and the “perceptron” was born, a simple model of the Artificial Neural Network (ANN) popularized in the 1980s and ’90s. Said Rosenblatt:

Many of the models which we have heard discussed are concerned with the question of what logical structure a system must have if it is to exhibit some property, X. This is essentially a question about a static system… An alternative way of looking at the question is: what kind of a system can evolve property X? I think we can show in a number of interesting cases that the second question can be solved without having an answer to the first.

It’s interesting to note that this approach, the early learning approach, met with immediate success but then, as limits were discovered, quickly faded from the scene. By the 1970s virtually no one thought that modeling the brain was a promising AI strategy, mostly because we didn’t have any computational models of the brain that were realistic. In a devastating critique of the so-called “one-layer” perceptron models, Marvin Minsky and Seymour Papert at MIT showed the limits of such computing models, and rallied an interest in symbolic, or knowledge-based methods in AI (so-called Good Old Fashioned Artificial Intelligence or GOFAI).
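The kind of limit Minsky and Papert identified can be seen in a few lines. What follows is a minimal sketch, assuming a single threshold unit trained with the classic perceptron update rule; the function names, learning rate, and epoch count are illustrative choices of mine, not anything taken from Rosenblatt or from Minsky and Papert. The unit learns AND, whose cases a single straight line can separate, but it cannot learn XOR, whose cases no single line can.

```python
from itertools import product


def fires(weights, bias, x1, x2):
    """Threshold unit: output 1 if the weighted sum is positive, else 0."""
    return 1 if weights[0] * x1 + weights[1] * x2 + bias > 0 else 0


def train_perceptron(samples, epochs=50, lr=1.0):
    """Train one threshold unit with the perceptron update rule.

    samples is a list of ((x1, x2), target) pairs with 0/1 targets.
    epochs and lr are illustrative defaults, not canonical values.
    """
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        mistakes = 0
        for (x1, x2), target in samples:
            delta = target - fires(weights, bias, x1, x2)
            if delta != 0:
                # Nudge the weights toward the correct answer.
                weights[0] += lr * delta * x1
                weights[1] += lr * delta * x2
                bias += lr * delta
                mistakes += 1
        if mistakes == 0:  # every sample classified correctly
            break
    return weights, bias


def accuracy(samples, weights, bias):
    """Fraction of samples the trained unit classifies correctly."""
    hits = sum(fires(weights, bias, x1, x2) == t for (x1, x2), t in samples)
    return hits / len(samples)


inputs = list(product([0, 1], repeat=2))
AND = [((a, b), a & b) for a, b in inputs]
XOR = [((a, b), a ^ b) for a, b in inputs]

and_weights, and_bias = train_perceptron(AND)
xor_weights, xor_bias = train_perceptron(XOR)

print("AND accuracy:", accuracy(AND, and_weights, and_bias))
print("XOR accuracy:", accuracy(XOR, xor_weights, xor_bias))
```

The unit should get every AND case right, while at least one XOR case stays wrong however long training runs, because no straight line separates XOR’s two classes.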

The knowledge-based methods failed, too. And so by the 1990s AI was effectively disappearing. Chastened and chagrined, researchers called it the “AI Winter” (one of two, it turns out, the other in the 1960s after the failures of machine translation systems). All this changed — and rather drastically — when the World Wide Web came on the scene. Suddenly massive datasets suitable for empirical learning work were widely available. Add to this, the simple “perceptron” models were now improved, with multi-layer neural networks and sophisticated techniques known as “back propagation.” Statistics — not “knowledge” — showed renewed promise, and so Phoenix-like, the idea of a learning, evolving AI along with its (vague) similarity to the brain and biological systems picked up speed, and indeed dominated discussions of AI by the turn of the century.

Enter Modern AI, which has essentially two active programs. On the one hand, the idea is to inspect the brain for hints into AI. We want to, in effect, reverse-engineer intelligence into artifacts by studying the brain. On the other, we view intelligence as an emergent phenomenon of complex systems. In the dry-eyed version of this, we have Big Data, where massive datasets empower an emerging intelligence using statistics and pattern-matching. And the “true believers” version sees intelligence literally emerging out of the complexity of the World Wide Web and all its connections, just as human life supposedly evolved from the complexity of non-life in the world around us. These latter ideas cover a lot of ground, so we can set them aside for now. Our interest at present is in the neuroscience approaches to AI, a program that, admittedly, has a certain plausibility to it, on its face. And in the wake of the failures of traditional symbolic AI, what else do we have? It’s the brain, stupid.

Well, it is, but it’s not. Mirroring the consciousness conundrums, the quest for AI now anchored in brain research appears destined to the same hodge-podge, Hail Mary!-type theorizing and prognosticating as consciousness studies. There’s a reason for this, I think, which is again prefigured in Leibniz’s pesky comment.

Some Theories Popular Today

Take Jeff Hawkins. Famous for developing the Palm Pilot and as an all around smart guy in Silicon Valley, Hawkins dipped his toe into the AI waters in 2004 with the publication of his On Intelligence, a bold and original attempt to summarize the volumes of neuroscience data about thinking in the neocortex with a hierarchical model of intelligence. The neocortex, Hawkins argues, takes input from our senses and “decodes” it in hierarchical layers, with each higher layer making predictions from the data provided by a lower, until we reach the top of the hierarchy and some overall predictive theory is synthesized from the output of the lower layers. His theory makes sense of some empirical data, such as differences in our responses based on different types of input we receive. For “easier” predictive problems, the propagation up the neocortex hierarchy terminates sooner (we’ve got the answer), and for tougher problems, the cortex keeps processing and passing the neural input up to higher, more powerful and globally sensitive layers. The solution is then made available or passed back to lower layers until we have a coherent prediction based on the original input.

Hawkins has an impressive grasp of neuroscience, and he’s an expert at using his own innovative brain to synthesize lots of data into a coherent picture of human thinking. Few would disagree that the neocortex is central to any understanding of human cognition, and intuitively (at least to me) his hierarchical model explains why we sometimes pause to “process” more of the input we’re receiving in our environments before we have a picture of things (a prediction, as he says). He cites the commonsense case of returning to your home and finding the doorknob moved a few inches to the left (or right). The prediction has been coded into lower levels because the benefit of prior experience has made it rote: we open the door again and again, and the knob is always in the same place. So when it’s moved slightly, Hawkins claims, the rote prediction fails, and the cortex sends the visual and tactile data further up the hierarchy, until the brain gives us a new prediction (which in turn will spark other, more “global” thinking as we search for an explanation, and so on).
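The doorknob story can be written down as a toy program. This is only a sketch of the escalation idea as described above, not Hawkins’s model or any code of his; every name, the remembered knob position, and the tolerance are hypothetical values invented for illustration. Each “level” either returns a prediction or passes the input upward.

```python
EXPECTED_KNOB_CM = 90   # hypothetical remembered knob position
TOLERANCE_CM = 10       # hypothetical range the middle level can absorb


def rote_level(knob_cm):
    """Lowest level: handles only the exact, habitual case."""
    if knob_cm == EXPECTED_KNOB_CM:
        return "open the door on autopilot"
    return None  # prediction failed; pass the input upward


def local_level(knob_cm):
    """Middle level: tolerates a small displacement of the knob."""
    if abs(knob_cm - EXPECTED_KNOB_CM) <= TOLERANCE_CM:
        return "adjust the reach and note the surprise"
    return None


def global_level(knob_cm):
    """Top level: always produces some interpretation."""
    return "stop and look for an explanation"


LEVELS = [rote_level, local_level, global_level]


def resolve(knob_cm, levels=LEVELS):
    """Return (depth, prediction) from the first level that can answer."""
    for depth, level in enumerate(levels):
        prediction = level(knob_cm)
        if prediction is not None:
            return depth, prediction
    return None


print(resolve(90))   # handled at the lowest level
print(resolve(95))   # escalated one level
print(resolve(200))  # escalated to the top
```

The sketch runs, but notice that all of its apparent intelligence was typed in by hand: the hierarchy only decides which canned answer to return.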

Ok. I’ll buy it as far as it goes, but the issue with Hawkins, like with the consciousness debates, is that it doesn’t really “go.” How exactly are we intelligent, again? For all the machinery of a hierarchical model of intelligence, “intelligence” itself remains largely untouched. Offering that it’s “hierarchical” and “located in the neocortex” is hardly something we can reverse engineer, any more than we can explain the taste of a fine red wine by pointing to quantum events in microtubules. “So what?” one might ask, without fear of missing the point. To put it another way, we don’t want a brain-inspired systems-level description of what is happening in the brain when we act intelligently — when we see what is relevant in complex, dynamic environments — we want a systems description of how the “box” itself works. What’s in the box? That’s the theory we need, but it’s not what we get from Hawkins, however elaborate the hierarchical vision of the cortex might be.

The Facile Nature of AI Theories

The facile nature of such theories has been noticed by other researchers, as well. As NYU cognitive psychologist Gary Marcus has pointed out, Hawkins’s hierarchical-structure theory of intelligence inspired Ray Kurzweil’s latest theory, too. Kurzweil expounds on Hawkins’s speculations in his 2012 How to Create a Mind. But, like Hawkins, Kurzweil too seems to offer a hand-waving theory of AI based on vague insights about the brain. Says Marcus:

We already knew that the brain is structured, but the real question is what all that structure does, in technical terms. How do the neural mechanisms in the brain map onto the brain’s cognitive mechanisms?

And Marcus goes on to point out that such theories are entirely too generic to really advance the ball on AI:

Almost anything any creature does could at some level be seen as hierarchical-pattern recognition; that’s why the idea has been around since the late nineteen-fifties. But simply asserting that the mind is a hierarchical-pattern recognizer by itself tells us too little: it doesn’t say why human beings are the sort of creatures that use language (rodents presumably have a capacity for hierarchical-pattern recognition, too, but don’t talk), and it doesn’t explain why many humans struggle constantly with issues of self-control, nor why we are the sort of creatures who leave tips in restaurants in towns to which we will never return.

Don’t take my word here, or Marcus’s. To prove my point, just do what Hawkins suggests. Take his entire theory and code it up in a software system that reproduces exactly the connections he specifies. What’s likely to happen? The smart money says “not much,” because such systems have in fact been around for decades in computer science (Marcus says the 1950s, though you could draw the line further back), and we already know that such systems-theories don’t provide the underlying juice, whatever it is, to actually reproduce thinking. If they did, the millions of lines of code we’ve generated implementing different AI “architectures” inspired by the brain would have hit on it. Something more is going on, clearly. (As it turns out, Hawkins himself has largely proved my point. He launched a software company that provides predictions of abnormal network events, for security purposes, using computational models inspired by his research on the neocortex. Have you heard of the company? Me neither, until I read his web page. Not to be cruel, but if he decoded human intelligence in a programmable way, the NASDAQ would have told us by now.)

I’m not picking on Hawkins. Let’s take another popular account, this time from a practicing neuroscientist, all around smart guy David Eagleman. Eagleman argues in his 2012 Incognito that the brain is a “team of rivals,” a theory that mirrors AI pioneer Marvin Minsky’s agents-based approach to reproducing human thought in his Society of Mind (1986), and later The Emotion Machine (2006). The brain reasons and thinks, claims Eagleman, by proposing different interpretations of sense data from our environment, and through refinement and checking against available evidence and pre-existing beliefs, allowing the “best” interpretation to win out. Different systems in the brain provide different pictures of reality, and the competition among these systems yields stable theories or predictions at the level of our conscious beliefs and thoughts.

Like Explaining a Magic Trick?

This is a quick pass through Eagleman’s ideas on intelligence, but even if I were to dedicate several more paragraphs of explanation, I hope the reader can see the same problem up ahead. One is reminded of Daniel Dennett’s famous quip in his “Cognitive Wheels” article about artificial intelligence, where he likens AI to explanations of a magic trick:

It is rather as if philosophers were to proclaim themselves expert explainers of the methods of a stage magician, and then, when we ask them to explain how the magician does the sawing-the-lady-in-half-trick, they explain that it is really quite obvious: the magician doesn’t really saw her in half; he simply makes it appear that he does. “But how does he do that?” we ask. “Not our department,” say the philosophers, and some of them add, sonorously: “Explanation has to stop somewhere.”

But the “team of rivals” explanation has stopped, once again, before we’ve gotten anywhere meaningful. Of course the brain may be “like this” or it may be “like that” (insert a system or model); we’re searching for what makes the systems description work as a theory of intelligence in the first place. “But how?” we keep asking (echoing Dennett). Silence.

Well, not to pick on Eagleman, either. I thoroughly enjoyed his book, at least right up to the point where he tackles human intelligence. It’s not his fault. If someone is looking at the brain to unlock the mystery of the mind, the specter of Leibniz is sure to haunt him, no matter how smart or well informed he may be. The issue with human intelligence is not the sort of thing that can be illuminated by poking around the brain to extract a computable “system” — the systems-level description gives us an impressive set of functions and structures that can be written down and discussed, but it’s essentially an inert layer sitting on top of a black box. Again, it’s what’s inside the box that we want, however many notes we scribble on its exterior.

This admittedly brief tour through neuroscience-inspired theories of AI should make clear the profound challenges the field still faces. Like the problem of consciousness, the problem of intelligence seems almost hopelessly abstruse and difficult to decode, using the tools of a mechanistic science. If this sounds overly pessimistic, I’d like to end by considering some results from the history of science.

The Incompleteness Theorems

The famous mathematical logician Kurt Gödel proved in 1931 an utterly startling, and now famous, result about formal systems, called the Incompleteness Theorems. Gödel put to rest a longtime dream of mathematicians to reduce mathematics to logic (numbers to formal, computable systems). Gödel showed that any formal system complex enough to be interesting (containing the “Peano” axioms for addition, basically) would have fundamental limitations: it cannot be both consistent and complete. His result implies that mathematical thinking lies outside the scope of logical proof (and hence computation itself), no matter how complex the logical formalism one uses.

Here’s the point. Far from shutting down further research in mathematics, Gödel’s result arguably paved a path to modern computation. Turing published his Halting Problem results based on Gödel’s result, and later provided the theoretical model (the Turing Machine) for universal computing machines. Not bad for a limiting result.

One might make similar observations about, say, Heisenberg’s Uncertainty Principle, under which the position and the momentum of a particle cannot both be measured to arbitrary precision at the same time. Again, this is a limitation, but it’s been a boon to further research in quantum mechanics. Limitations are often productive in ways that we can’t predict. So the question isn’t about disguising or ignoring the manifest problems with neuroscientific theories of AI in the name of progress. The history of science suggests that our limitations, once acknowledged, may in fact prove vastly more productive in the long run than continuing to make the same errors, and hype the same bad theories.

We may, in other words, simply be on the wrong path. That’s not a limitation. It’s knowledge.

Founder and CEO of a software company in Austin, Texas, Erik Larson has been a Research Scientist Associate at the IC2 Institute, University of Texas at Austin, where in 2009 he also received his PhD focusing on computational linguistics, computer science, and analytic philosophy. He now resides in Seattle.

Image: PET image of the human brain/Wikipedia.